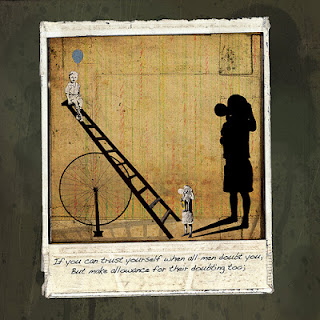

If you can keep your head when all about you customers and colleagues are losing theirs and blaming it on you, then, with apologies to Kipling, you'll stand a decent chance of being comfortable in tech support.

I often think about the crossover between support and test, and I've recruited people with support experience to work as testers more than once. I've also noted before that I have my test team watch all support traffic, and it's common in many companies for testers to be brought in to advise on, and test fixes for, issues that start as support tickets. But owning a hard-to-reproduce, high-value support issue, with no buffer between you and the customer who is experiencing the pain, and being the one responsible for working out what the issue is and identifying a workaround, adds piquancy, urgency and pressure to the diagnostic task.

This kind of thing is often more constrained than the average test mission. In this scenario you know that there's an issue. You even know some of the symptoms, but you usually have a distorted lens through which to view it, a delay on your interactions with it ... If you can wait and not be tired by waiting ... restrictions on the questions you can reasonably ask, and an understandably limited supply of patience on the part of the customer, who frankly just wants the thing to work and who has their own, usually pressing, deadlines, which are the reason they've had to open your application again for the first time in months. You also have to split your time between looking for a solution and looking for a workaround. Prioritisation and timeliness are key.

In one case I can recall, a customer was having difficulties with a file we'd supplied which, when applied to their installation, caused a fatal error. There was a known issue with files of this kind being corrupted in transfer or deployment and, using Occam's Razor, we initially explored that as the most likely cause - without success.

With the customer's approval, and the aim of getting a quick win for them, we tried some sledgehammer workarounds such as reinstallation and a change of host machine. I also suggested a somewhat horrid fix that the customer understood would work - with some compromises - but decided not to take up immediately.

The customer thought that it was probably a build issue - they had just upgraded - but I was less certain because I had set up a local shadow running with the same file the customer had (confirmed by md5sum) with no ill effects. Creating a local copy of the customer environment is often a sensible - if potentially expensive - tactic, although ensuring that you've matched it in all significant respects is a challenge in its own right, given that you don't yet know what is significant.
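Confirming that two copies of a file are byte-for-byte identical, as the md5sum check above did, is quick to script. The sketch below is an illustrative Python equivalent using the standard `hashlib` module, not the tooling used in this case; the helper names and the example file name `update.dat` are hypothetical. It assumes you have a local copy of the file and a line of `md5sum` output pasted by the customer.

```python
import hashlib


def md5_of(path, chunk_size=65536):
    """Return the hex MD5 digest of a file, reading it in chunks so large files are fine."""
    digest = hashlib.md5()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def same_file_contents(path_a, path_b):
    """True if two local files have identical MD5 digests."""
    return md5_of(path_a) == md5_of(path_b)


def matches_reported(path, md5sum_line):
    """Compare a local file with one line of md5sum output, e.g.
    '900150983cd24fb0d6963f7d28e17f72  update.dat' (hypothetical file name).
    md5sum prints the digest first, then the file name."""
    reported = md5sum_line.split()[0].lower()
    return md5_of(path) == reported
```

A match tells you the bytes are the same, which pushes suspicion towards the environment rather than the file. MD5 is adequate for spotting accidental corruption in transfer or deployment, but it is not a defence against deliberate tampering.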

Regardless of the speculation, the mission now was to get either a workaround or a fix, or both, in short order. I started with the idea that the issue was environmental and explored ways to provoke the problem. In the absence of full information about the customer setup I made assumptions about it and tried to provoke the kinds of effects that locale differences can show, such as problems with file encodings.
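To illustrate the kind of encoding effect meant here (this is not one of the actual experiments from this case, and the product in question was not written in Python), the same bytes can read quite differently depending on which encoding the reading side assumes, for example when that encoding is taken from the host locale. The helper `decode_under` and the sample text are hypothetical.

```python
def decode_under(data, encodings=("utf-8", "cp1252", "latin-1")):
    """Decode the same bytes under several encodings, keeping the text or the error."""
    results = {}
    for enc in encodings:
        try:
            results[enc] = data.decode(enc)  # strict decoding: invalid bytes raise
        except UnicodeDecodeError as exc:
            results[enc] = f"<error: {exc.reason}>"
    return results


if __name__ == "__main__":
    text = "café"
    utf8_bytes = text.encode("utf-8")      # b'caf\xc3\xa9'
    cp1252_bytes = text.encode("cp1252")   # b'caf\xe9'

    # UTF-8 bytes read as cp1252 or latin-1 give mojibake rather than an error.
    print(decode_under(utf8_bytes))
    # cp1252 bytes read as UTF-8 fail outright on the lone 0xE9 byte.
    print(decode_under(cp1252_bytes))
```

The two failure modes differ usefully for diagnosis: a wrong encoding may raise an error immediately, or it may silently produce corrupted text that only causes trouble further along.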

By working back from the symptoms, I found some issues that exhibited the same behaviour and then asked a developer with expertise in the customer platform for pointers on how to provoke those issues directly. He was able to finesse my bug reports, but we weren't able to get to the customer issue itself. If you can trust yourself when all men doubt you, But make allowance for their doubting too ...

At the same time, I looked for historical data in bug reports (remember: don't just search the open ones) and previous support tickets. I also spoke to other members of the support team about a locale issue with another customer at around the same time, but the two were clearly unrelated and would need different solutions.

The initial customer report contained several overlapping issues from their own attempts to diagnose the problem and we maintained a dialogue about those, progressively filtering out the ones that we could resolve or at least explain and that appeared not to have a bearing on the main problem.

At this point I asked whether we could have direct access to their installation and the customer was able to provide it, which is unusual but enormously helpful when available. I was able to switch from the meta-problem of trying to set up an environment that would reproduce the issue and dive straight into investigating the cause of a problem I could now provoke at will.

I first tried some high-level stuff, such as installing different JVMs and running some of our software components by hand, to narrow down the area in which the problem might exist. Then I returned to the environment, repeating some of my earlier experiments to see if they gave the same responses on the customer machine. I also did a direct eyeball comparison, between the customer machine and our local shadow, of all the configuration options that I thought might be related to the issue, and some that I thought weren't.

Changing tack again, I inspected our source code, deoxidised my rusty Java and wrote a validator for the file based on the library our software uses. When that failed to show any issues, I altered my validator to behave more like our software, but again it showed no issues. This wasn't a disaster, just more data.

While your time is important to your company, the customer's time is important to them, their company and by extension your company. You have to be focussed on getting value for every experiment you carry out ... If you can fill the unforgiving minute with sixty seconds' worth of distance run.

To understand the problem space better, I reverted to manual work and tried a bunch of different files: ones not created for this customer, which I knew would not work in this environment but which I had used previously in other internal installations. I found that under some circumstances I could get further in the interpretation of the file before encountering an error. Exploiting this vector, I crafted more variants of the file and tried them in turn. One resolved the issue.

So now we had a workaround for the customer and a target for the dev team. Knowing which change to the file had the critical effect on the installation, one of the developers built me an instrumented version of the software with focussed debug output around that change. It confirmed enough detail to permit further code inspection which, informed by the research I'd done, identified a subtle software issue that required particular environmental settings, particular content in the file and certain temporal dependencies.

Exploratory testing techniques are invaluable in these sorts of cases. The heuristics and approaches that Rapid Software Testing espouses (factoring; logical vs lateral thinking; plunge in and quit; random fire; critical thinking; questioning your premises; focus/defocus; asking "what if?") can keep giving you options to try. You have to be prepared to iterate, to revisit, to expand and contract your search, and to investigate and assimilate all available resources (people, documentation, code inspection).

Stakeholder interaction is critical too. Live support can be stressful: you have to think on your feet, be professional at all times and provide a rationale for the steps you are asking the customer to perform. During an extended support interaction, customers usually appreciate being given some options, and keeping them informed and demonstrating effort and progress, even if only in narrowing down the possibilities, can provide reassurance. You also need to learn from the experience, to feed your gut for next time round. With hindsight, I could have found this issue faster and more directly. I'll bear that in mind in future.

If you can dream - and not make dreams your master; If you can think - and not make thoughts your aim ... If you're a tester looking for a challenge and a chance to hone your skills, you could, with further apologies to Kipling, do worse than swing by tech support for a spell, my son.

Image: http://flic.kr/p/7FVs91
